
10/31/2023 Meta learning maml

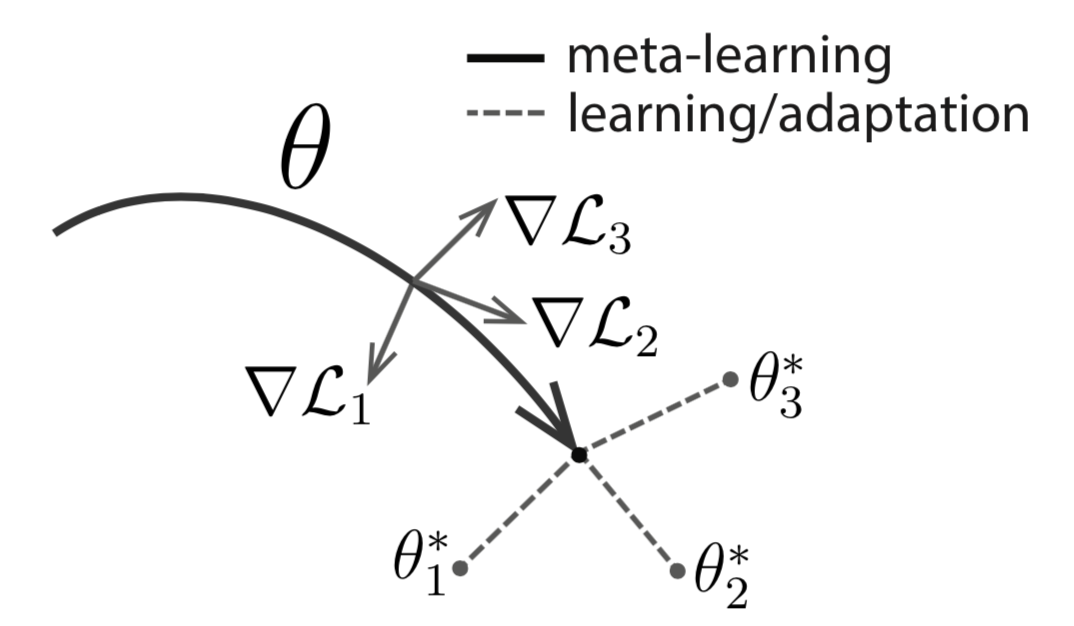

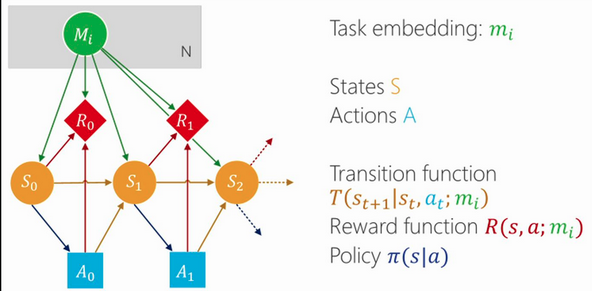

MAML in PyTorch - Re-implementation and Beyond

A PyTorch implementation of Model-Agnostic Meta-Learning (MAML). We faithfully reproduce the official TensorFlow implementation while incorporating a number of additional features that may ease further study of this very high-profile meta-learning framework. This repository contains code for training and evaluating MAML on the mini-ImageNet and tiered-ImageNet datasets most commonly used for few-shot image classification.

To the best of our knowledge, this is the only PyTorch implementation of MAML to date that fully reproduces the results in the original paper without applying tricks such as data augmentation, evaluation on multiple crops, and ensembling of multiple models. Other existing PyTorch implementations typically see a ~3% gap in accuracy for the 5-way-1-shot and 5-way-5-shot classification tasks on mini-ImageNet.

Beyond reproducing the results, our implementation comes with a few extras that we believe can be helpful for further development of the framework. We highlight the improvements we have built into our code, and discuss some observations that warrant attention.

Implementation Highlights

Batch normalization with per-episode running statistics. Our implementation can track global and/or per-episode running statistics, and therefore supports both transductive and inductive inference.

Better data pre-processing. The official implementation neither normalizes nor augments data. We support data normalization and a variety of data augmentation techniques. We also implement data batching and support/query-set splitting more efficiently. We support mini-ImageNet, tiered-ImageNet and more.

More options for outer-loop optimization. We support multiple optimizers and learning-rate schedulers for the outer-loop optimization. The official implementation uses vanilla gradient descent in the inner loop.

We support the standard four-layer ConvNet as well as ResNet-12 and ResNet-18 as the encoder.

Easy layer freezing. We provide an interface for layer freezing experiments. One may freeze an arbitrary set of layers or blocks during inner-loop adaptation.

Meta-learning with zero-initialized classifier head. The official implementation learns a meta-initialization for both the encoder and the classifier head. This prevents one from varying the number of categories at training or test time. With our implementation, one may opt to learn a meta-initialization for the encoder while initializing the classifier head at zero.

Distributed training and gradient checkpointing. MAML is very memory-intensive because it buffers all tensors generated throughout the inner-loop adaptation steps. Gradient checkpointing trades compute for memory, effectively bringing the memory cost from O(N) down to O(1), where N is the number of inner-loop steps. In our experiments, gradient checkpointing saved up to 80% of GPU memory at the cost of running the forward pass more than once (a moderate 20% increase in running time).

The official implementation assumes transductive learning. The batch normalization layers do not track running statistics at training time, and they use mini-batch statistics at test time. The implicit assumption here is that test data come in mini-batches and are perhaps balanced across categories. This is a very restrictive assumption, and it means MAML is not directly comparable with the vast majority of meta-learning and few-shot learning methods. Unfortunately, this is not immediately obvious from the paper, and our findings suggest that the performance of MAML is hugely overestimated.

Accuracy is very sensitive to the size of the query set in the transductive setting. For example, the result for 5-way-1-shot classification on mini-ImageNet from the paper (48.70%) was obtained on five queries, one per category. We found that the accuracy dropped by ~1.5% given five queries per category, and by ~2.5% given 15 queries per category.

The paper reports mean accuracy over 600 independently sampled tasks, or trials. We found that 600 trials, again in the transductive setting, are insufficient for an unbiased estimate of model performance. The mean accuracy from 6,000 trials is more stable, and is always ~2% lower than that from the first 600 trials.
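The evaluation protocol discussed above (N-way K-shot episodes, a chosen number of queries per category, and mean accuracy over many trials) can be sketched in plain Python. This is a minimal, framework-free illustration and not the repository's code: `sample_episode`, `mean_and_ci95`, the toy `toy_data` dictionary, and the synthetic per-trial accuracies are all hypothetical and exist only to show the mechanics.

```python
import math
import random
import statistics


def sample_episode(data_by_class, n_way, k_shot, n_query, rng):
    """Sample one N-way K-shot episode with n_query queries per category.

    data_by_class maps a class label to a list of items (hypothetical layout).
    Returns (support, query), each a list of (item, episode_label) pairs.
    """
    classes = rng.sample(sorted(data_by_class), n_way)
    support, query = [], []
    for episode_label, cls in enumerate(classes):
        # Draw disjoint support and query items for this category.
        items = rng.sample(data_by_class[cls], k_shot + n_query)
        support += [(x, episode_label) for x in items[:k_shot]]
        query += [(x, episode_label) for x in items[k_shot:]]
    return support, query


def mean_and_ci95(accuracies):
    """Mean and half-width of a normal-approximation 95% interval."""
    mean = statistics.fmean(accuracies)
    half_width = 1.96 * statistics.stdev(accuracies) / math.sqrt(len(accuracies))
    return mean, half_width


rng = random.Random(0)

# Toy dataset: 10 classes with 20 placeholder items each (not real images).
toy_data = {c: [f"img_{c}_{i}" for i in range(20)] for c in range(10)}
support, query = sample_episode(toy_data, n_way=5, k_shot=1, n_query=15, rng=rng)
print(len(support), len(query))  # 5 75

# Synthetic per-trial accuracies, used only to show how the interval
# narrows as the number of trials grows from 600 to 6,000.
trial_acc = [rng.gauss(0.5, 0.1) for _ in range(6000)]
mean_600, hw_600 = mean_and_ci95(trial_acc[:600])
mean_6000, hw_6000 = mean_and_ci95(trial_acc)
print(f"600 trials:  {mean_600:.4f} +/- {hw_600:.4f}")
print(f"6000 trials: {mean_6000:.4f} +/- {hw_6000:.4f}")
```

A narrower interval reflects reduced sampling noise only; it does not by itself explain the systematic ~2% gap between 600 and 6,000 trials reported above.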
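To see why the use of mini-batch statistics at test time makes predictions depend on the composition of the query set, the following sketch normalizes a single scalar feature in two ways. It is a simplified stand-in for a batch normalization layer, not the repository's or PyTorch's implementation: `normalize_transductive`, `normalize_inductive`, and all numeric values are hypothetical.

```python
import statistics

EPS = 1e-5  # small constant for numerical stability


def normalize_transductive(batch):
    """Normalize with the statistics of the test mini-batch itself."""
    mean = statistics.fmean(batch)
    var = statistics.pvariance(batch)
    return [(x - mean) / (var + EPS) ** 0.5 for x in batch]


def normalize_inductive(batch, running_mean, running_var):
    """Normalize every example with fixed, previously tracked statistics."""
    return [(x - running_mean) / (running_var + EPS) ** 0.5 for x in batch]


query = 2.0
# The same query example placed in two different test mini-batches.
balanced_batch = [query, 0.0, 1.0, 3.0, 4.0]
skewed_batch = [query, 3.0, 3.5, 4.0, 4.5]

# Transductive: the output for the query changes with its batch mates.
t_balanced = normalize_transductive(balanced_batch)[0]
t_skewed = normalize_transductive(skewed_batch)[0]

# Inductive: fixed statistics (assumed values) give the same output
# for the query regardless of what else is in the batch.
i_balanced = normalize_inductive(balanced_batch, 2.0, 2.0)[0]
i_skewed = normalize_inductive(skewed_batch, 2.0, 2.0)[0]

print("transductive:", t_balanced, t_skewed)
print("inductive:   ", i_balanced, i_skewed)
```

In the transductive case the normalized query value shifts when the rest of the batch changes, which is one way the number and balance of queries can influence accuracy. With fixed statistics the query is processed independently of the other test examples.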
